Greetings,

I'm a long-time MSSQL -> MySQL user.

I am preparing my brain and environment for migrating to MongoDB.

I ran a bit of a stress test and now I can't figure out how to recover from it.

1. I ran a script with a fake name generator to fill the mounted disk with MongoDB data.

2. When I woke up, the disk was, as expected, full (5 GB), with 60+ million documents.

MongoDB would not start, so I mounted another 8 GB drive, rsynced the mongodb directory to it, and now it starts.

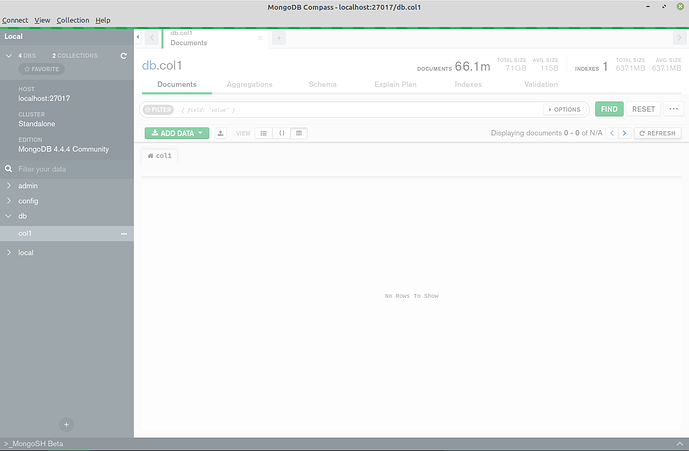

3. The problem is that MongoDB Compass was showing "0 - 20 of N/A".

At least I could see the data.

4. After mongodb -repair, it shows:

0 - 0 of N/A

I don’t see any data.
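For context, here is a minimal Python sketch of the kind of fill script described in step 1. It is not the actual script: the name lists, the field names, the batch size, and the free-space guard are all assumptions for illustration. A guard like `has_free_space` is one way such a test could stop before the disk is completely full.

```python
import random
import shutil

# Hypothetical name pools; a real fake-name generator would be much larger.
FIRST_NAMES = ["Anna", "Boris", "Carla", "Dmitri", "Elena"]
LAST_NAMES = ["Novak", "Kovac", "Horvat", "Petrov", "Marek"]


def fake_person(rng):
    """Build one fake document (field names are illustrative)."""
    return {
        "first_name": rng.choice(FIRST_NAMES),
        "last_name": rng.choice(LAST_NAMES),
        "age": rng.randint(18, 90),
    }


def batches(total, batch_size, seed=0):
    """Yield lists of fake documents until `total` documents were produced."""
    rng = random.Random(seed)
    remaining = total
    while remaining > 0:
        n = min(batch_size, remaining)
        yield [fake_person(rng) for _ in range(n)]
        remaining -= n


def has_free_space(path, min_free_bytes):
    """Return True while the filesystem holding `path` has enough room left."""
    return shutil.disk_usage(path).free >= min_free_bytes


if __name__ == "__main__":
    # Assumption: with a driver such as pymongo, each batch would be passed
    # to collection.insert_many(batch). Here the batches are only printed.
    for batch in batches(total=10, batch_size=4):
        if not has_free_space(".", 512 * 1024 * 1024):
            print("stopping: less than 512 MiB free")
            break
        print(len(batch), batch[0])
```

Stopping while some space is still free leaves the database room to start up and clean up afterwards.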

Please advise what is the best practice to recover from this.

Thank you

Logs:

When I run repair:

{"t":{"$date":"2021-04-08T09:45:43.547+02:00"},"s":"I", "c":"CONTROL", "id":23285, "ctx":"main","msg":"Automatically disabling TLS 1.0, to force-enable TLS 1.0 specify --sslDisabledProtocols 'none'"}

{"t":{"$date":"2021-04-08T09:45:43.551+02:00"},"s":"W", "c":"ASIO", "id":22601, "ctx":"main","msg":"No TransportLayer configured during NetworkInterface startup"}

{"t":{"$date":"2021-04-08T09:45:43.552+02:00"},"s":"I", "c":"NETWORK", "id":4648601, "ctx":"main","msg":"Implicit TCP FastOpen unavailable. If TCP FastOpen is required, set tcpFastOpenServer, tcpFastOpenClient, and tcpFastOpenQueueSize."}

{"t":{"$date":"2021-04-08T09:45:43.552+02:00"},"s":"I", "c":"STORAGE", "id":4615611, "ctx":"initandlisten","msg":"MongoDB starting","attr":{"pid":3408,"port":27017,"dbPath":"/media/ukro/data2/mongodb/","architecture":"64-bit","host":"work3"}}

{"t":{"$date":"2021-04-08T09:45:43.552+02:00"},"s":"I", "c":"CONTROL", "id":23403, "ctx":"initandlisten","msg":"Build Info","attr":{"buildInfo":{"version":"4.4.4","gitVersion":"8db30a63db1a9d84bdcad0c83369623f708e0397","openSSLVersion":"OpenSSL 1.1.1d 10 Sep 2019","modules":[],"allocator":"tcmalloc","environment":{"distmod":"debian10","distarch":"x86_64","target_arch":"x86_64"}}}}

{"t":{"$date":"2021-04-08T09:45:43.552+02:00"},"s":"I", "c":"CONTROL", "id":51765, "ctx":"initandlisten","msg":"Operating System","attr":{"os":{"name":"LinuxMint","version":"4"}}}

{"t":{"$date":"2021-04-08T09:45:43.552+02:00"},"s":"I", "c":"CONTROL", "id":21951, "ctx":"initandlisten","msg":"Options set by command line","attr":{"options":{"storage":{"dbPath":"/media/ukro/data2/mongodb/"}}}}

{"t":{"$date":"2021-04-08T09:45:43.552+02:00"},"s":"E", "c":"STORAGE", "id":20568, "ctx":"initandlisten","msg":"Error setting up listener","attr":{"error":{"code":9001,"codeName":"SocketException","errmsg":"Address already in use"}}}

{"t":{"$date":"2021-04-08T09:45:43.552+02:00"},"s":"I", "c":"REPL", "id":4784900, "ctx":"initandlisten","msg":"Stepping down the ReplicationCoordinator for shutdown","attr":{"waitTimeMillis":10000}}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"COMMAND", "id":4784901, "ctx":"initandlisten","msg":"Shutting down the MirrorMaestro"}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"SHARDING", "id":4784902, "ctx":"initandlisten","msg":"Shutting down the WaitForMajorityService"}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"NETWORK", "id":4784905, "ctx":"initandlisten","msg":"Shutting down the global connection pool"}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"NETWORK", "id":4784918, "ctx":"initandlisten","msg":"Shutting down the ReplicaSetMonitor"}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"SHARDING", "id":4784921, "ctx":"initandlisten","msg":"Shutting down the MigrationUtilExecutor"}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"CONTROL", "id":4784925, "ctx":"initandlisten","msg":"Shutting down free monitoring"}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"STORAGE", "id":4784927, "ctx":"initandlisten","msg":"Shutting down the HealthLog"}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"STORAGE", "id":4784929, "ctx":"initandlisten","msg":"Acquiring the global lock for shutdown"}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"-", "id":4784931, "ctx":"initandlisten","msg":"Dropping the scope cache for shutdown"}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"FTDC", "id":4784926, "ctx":"initandlisten","msg":"Shutting down full-time data capture"}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"CONTROL", "id":20565, "ctx":"initandlisten","msg":"Now exiting"}

{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"CONTROL", "id":23138, "ctx":"initandlisten","msg":"Shutting down","attr":{"exitCode":48}}
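The output above is mostly informational shutdown messages. As a rough aid for reading it, here is a small Python sketch that keeps only the warning, error, and fatal entries from MongoDB's structured JSON log lines. The function name `notable_entries` and the `SAMPLE_LINES` list are illustrative; the code relies only on fields visible in the lines above (`s`, `c`, `msg`, `attr`).

```python
import json

# Two lines copied from the repair output above, used as sample input.
SAMPLE_LINES = [
    '{"t":{"$date":"2021-04-08T09:45:43.552+02:00"},"s":"E", "c":"STORAGE", '
    '"id":20568, "ctx":"initandlisten","msg":"Error setting up listener",'
    '"attr":{"error":{"code":9001,"codeName":"SocketException",'
    '"errmsg":"Address already in use"}}}',
    '{"t":{"$date":"2021-04-08T09:45:43.553+02:00"},"s":"I", "c":"CONTROL", '
    '"id":20565, "ctx":"initandlisten","msg":"Now exiting"}',
]


def notable_entries(lines, severities=("W", "E", "F")):
    """Yield (severity, component, message, attr) for the chosen severities."""
    for line in lines:
        line = line.strip()
        if not line.startswith("{"):
            continue  # skip blank lines and plain-text headings
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip lines that are not valid JSON
        if entry.get("s") in severities:
            yield entry["s"], entry.get("c"), entry.get("msg"), entry.get("attr", {})


if __name__ == "__main__":
    for sev, comp, msg, attr in notable_entries(SAMPLE_LINES):
        print(sev, comp, msg, attr.get("error", ""))
```

Applied to the repair output, this would leave the ASIO warning and the "Error setting up listener" entry with "Address already in use". That suggests another mongod was still bound to port 27017 at the time, so the process exited (exit code 48) before doing anything to the data files.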

MongoDB log:

{"t":{"$date":"2021-04-08T09:44:08.840+02:00"},"s":"W", "c":"COMMAND", "id":20525, "ctx":"conn11","msg":"Failed to gather storage statistics for slow operation","attr":{"opId":8415,"error":"lock acquire timeout"}}

{"t":{"$date":"2021-04-08T09:44:08.840+02:00"},"s":"I", "c":"COMMAND", "id":51803, "ctx":"conn11","msg":"Slow query","attr":{"type":"command","ns":"db.col1","appName":"MongoDB Compass","command":{"aggregate":"col1","pipeline":[{"$match":{"maxTimeMS":1111111}},{"$skip":0},{"$group":{"_id":1,"n":{"$sum":1}}}],"cursor":{},"maxTimeMS":5000,"lsid":{"id":{"$uuid":"497f25d5-1ef9-429d-bad7-f344cbb5bb6b"}},"$db":"db"},"planSummary":"COLLSCAN","numYields":5626,"queryHash":"37ECF029","planCacheKey":"37ECF029","ok":0,"errMsg":"Error in $cursor stage :: caused by :: operation exceeded time limit","errName":"MaxTimeMSExpired","errCode":50,"reslen":160,"locks":{"ReplicationStateTransition":{"acquireCount":{"w":5628}},"Global":{"acquireCount":{"r":5628}},"Database":{"acquireCount":{"r":5628}},"Collection":{"acquireCount":{"r":5628}},"Mutex":{"acquireCount":{"r":2}}},"protocol":"op_msg","durationMillis":5010}}