Replica set primary node:

rs.conf()

customer-replica-set:PRIMARY> rs.conf()

{

"_id" : "customer-replica-set",

"version" : 2,

"protocolVersion" : NumberLong(1),

"writeConcernMajorityJournalDefault" : true,

"members" : [

{

"_id" : 0,

"host" : "customer-replica-set-0.customer-replica-set-svc.mongodb.svc.cluster.local:27017",

"arbiterOnly" : false,

"buildIndexes" : true,

"hidden" : false,

"priority" : 1,

"tags" : {

},

"horizons" : {

"customer-prod-db" : "ec2-18-216-32-24.us-east-2.compute.amazonaws.com:31671"

},

"slaveDelay" : NumberLong(0),

"votes" : 1

},

{

"_id" : 1,

"host" : "customer-replica-set-1.customer-replica-set-svc.mongodb.svc.cluster.local:27017",

"arbiterOnly" : false,

"buildIndexes" : true,

"hidden" : false,

"priority" : 1,

"tags" : {

},

"horizons" : {

"customer-prod-db" : "ec2-3-17-154-122.us-east-2.compute.amazonaws.com:32595"

},

"slaveDelay" : NumberLong(0),

"votes" : 1

},

{

"_id" : 2,

"host" : "customer-replica-set-2.customer-replica-set-svc.mongodb.svc.cluster.local:27017",

"arbiterOnly" : false,

"buildIndexes" : true,

"hidden" : false,

"priority" : 1,

"tags" : {

},

"horizons" : {

"customer-prod-db" : "ec2-18-218-2-179.us-east-2.compute.amazonaws.com:30432"

},

"slaveDelay" : NumberLong(0),

"votes" : 1

}

],

"settings" : {

"chainingAllowed" : true,

"heartbeatIntervalMillis" : 2000,

"heartbeatTimeoutSecs" : 10,

"electionTimeoutMillis" : 10000,

"catchUpTimeoutMillis" : -1,

"catchUpTakeoverDelayMillis" : 30000,

"getLastErrorModes" : {

},

"getLastErrorDefaults" : {

"w" : 1,

"wtimeout" : 0

},

"replicaSetId" : ObjectId("5f388cc3900792e0998729e1")

}

}
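A client outside the cluster has to use the `horizons` addresses rather than the `*.svc.cluster.local` host names. As a rough sketch only, the external seed list could be assembled from an `rs.conf()`-shaped object like this. The helper name `buildHorizonUri` and the `tls=true` option are my own assumptions for illustration, not something the operator provides:

```javascript
// Sketch: collect the external ("horizon") address of every member from an
// rs.conf()-shaped object and join them into a replica-set connection string.
// `buildHorizonUri` is a hypothetical helper; `tls=true` is an assumption.
function buildHorizonUri(conf, horizonName) {
  const hosts = conf.members.map((member) => {
    const address = (member.horizons || {})[horizonName];
    if (!address) {
      throw new Error(`member ${member._id} has no horizon "${horizonName}"`);
    }
    return address;
  });
  return `mongodb://${hosts.join(",")}/?replicaSet=${conf._id}&tls=true`;
}

// Trimmed copy of the configuration shown above (only the fields used here).
const conf = {
  _id: "customer-replica-set",
  members: [
    { _id: 0, horizons: { "customer-prod-db": "ec2-18-216-32-24.us-east-2.compute.amazonaws.com:31671" } },
    { _id: 1, horizons: { "customer-prod-db": "ec2-3-17-154-122.us-east-2.compute.amazonaws.com:32595" } },
    { _id: 2, horizons: { "customer-prod-db": "ec2-18-218-2-179.us-east-2.compute.amazonaws.com:30432" } },
  ],
};

const horizonUri = buildHorizonUri(conf, "customer-prod-db");
console.log(horizonUri);
```

The function throws if any member lacks the named horizon, since a partial seed list would hide a misconfigured member.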

rs.status()

rs.status()

{

"set" : "customer-replica-set",

"date" : ISODate("2020-08-16T13:51:01.340Z"),

"myState" : 1,

"term" : NumberLong(3),

"syncingTo" : "",

"syncSourceHost" : "",

"syncSourceId" : -1,

"heartbeatIntervalMillis" : NumberLong(2000),

"majorityVoteCount" : 2,

"writeMajorityCount" : 2,

"optimes" : {

"lastCommittedOpTime" : {

"ts" : Timestamp(1597585859, 1),

"t" : NumberLong(3)

},

"lastCommittedWallTime" : ISODate("2020-08-16T13:50:59.046Z"),

"readConcernMajorityOpTime" : {

"ts" : Timestamp(1597585859, 1),

"t" : NumberLong(3)

},

"readConcernMajorityWallTime" : ISODate("2020-08-16T13:50:59.046Z"),

"appliedOpTime" : {

"ts" : Timestamp(1597585859, 1),

"t" : NumberLong(3)

},

"durableOpTime" : {

"ts" : Timestamp(1597585859, 1),

"t" : NumberLong(3)

},

"lastAppliedWallTime" : ISODate("2020-08-16T13:50:59.046Z"),

"lastDurableWallTime" : ISODate("2020-08-16T13:50:59.046Z")

},

"lastStableRecoveryTimestamp" : Timestamp(1597585840, 8),

"lastStableCheckpointTimestamp" : Timestamp(1597585840, 8),

"electionCandidateMetrics" : {

"lastElectionReason" : "stepUpRequestSkipDryRun",

"lastElectionDate" : ISODate("2020-08-16T01:45:37.715Z"),

"termAtElection" : NumberLong(3),

"lastCommittedOpTimeAtElection" : {

"ts" : Timestamp(1597542328, 1),

"t" : NumberLong(2)

},

"lastSeenOpTimeAtElection" : {

"ts" : Timestamp(1597542328, 1),

"t" : NumberLong(2)

},

"numVotesNeeded" : 2,

"priorityAtElection" : 1,

"electionTimeoutMillis" : NumberLong(10000),

"priorPrimaryMemberId" : 1,

"numCatchUpOps" : NumberLong(27017),

"newTermStartDate" : ISODate("2020-08-16T01:45:37.761Z"),

"wMajorityWriteAvailabilityDate" : ISODate("2020-08-16T01:45:38.278Z")

},

"members" : [

{

"_id" : 0,

"name" : "customer-replica-set-0.customer-replica-set-svc.mongodb.svc.cluster.local:27017",

"ip" : "192.168.22.100",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY",

"uptime" : 43532,

"optime" : {

"ts" : Timestamp(1597585859, 1),

"t" : NumberLong(3)

},

"optimeDate" : ISODate("2020-08-16T13:50:59Z"),

"syncingTo" : "",

"syncSourceHost" : "",

"syncSourceId" : -1,

"infoMessage" : "",

"electionTime" : Timestamp(1597542337, 1),

"electionDate" : ISODate("2020-08-16T01:45:37Z"),

"configVersion" : 2,

"self" : true,

"lastHeartbeatMessage" : ""

},

{

"_id" : 1,

"name" : "customer-replica-set-1.customer-replica-set-svc.mongodb.svc.cluster.local:27017",

"ip" : "192.168.80.99",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 43519,

"optime" : {

"ts" : Timestamp(1597585859, 1),

"t" : NumberLong(3)

},

"optimeDurable" : {

"ts" : Timestamp(1597585859, 1),

"t" : NumberLong(3)

},

"optimeDate" : ISODate("2020-08-16T13:50:59Z"),

"optimeDurableDate" : ISODate("2020-08-16T13:50:59Z"),

"lastHeartbeat" : ISODate("2020-08-16T13:51:00.605Z"),

"lastHeartbeatRecv" : ISODate("2020-08-16T13:51:00.876Z"),

"pingMs" : NumberLong(0),

"lastHeartbeatMessage" : "",

"syncingTo" : "customer-replica-set-2.customer-replica-set-svc.mongodb.svc.cluster.local:27017",

"syncSourceHost" : "customer-replica-set-2.customer-replica-set-svc.mongodb.svc.cluster.local:27017",

"syncSourceId" : 2,

"infoMessage" : "",

"configVersion" : 2

},

{

"_id" : 2,

"name" : "customer-replica-set-2.customer-replica-set-svc.mongodb.svc.cluster.local:27017",

"ip" : "192.168.33.103",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 43525,

"optime" : {

"ts" : Timestamp(1597585859, 1),

"t" : NumberLong(3)

},

"optimeDurable" : {

"ts" : Timestamp(1597585859, 1),

"t" : NumberLong(3)

},

"optimeDate" : ISODate("2020-08-16T13:50:59Z"),

"optimeDurableDate" : ISODate("2020-08-16T13:50:59Z"),

"lastHeartbeat" : ISODate("2020-08-16T13:51:00.659Z"),

"lastHeartbeatRecv" : ISODate("2020-08-16T13:50:59.778Z"),

"pingMs" : NumberLong(0),

"lastHeartbeatMessage" : "",

"syncingTo" : "customer-replica-set-0.customer-replica-set-svc.mongodb.svc.cluster.local:27017",

"syncSourceHost" : "customer-replica-set-0.customer-replica-set-svc.mongodb.svc.cluster.local:27017",

"syncSourceId" : 0,

"infoMessage" : "",

"configVersion" : 2

}

],

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1597585859, 1),

"signature" : {

"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),

"keyId" : NumberLong(0)

}

},

"operationTime" : Timestamp(1597585859, 1)

}
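The status above shows one PRIMARY and two SECONDARY members, all with `health` 1 and the same optime, so the set itself looks fine from inside the cluster. A minimal sketch of that check, assuming a trimmed `rs.status()`-shaped object; `checkReplicaSet` and the flattened `optimeSeconds` field (standing in for `optime.ts`) are hypothetical simplifications:

```javascript
// Sketch: summarize an rs.status()-shaped object.
// `optimeSeconds` is a simplified stand-in for the seconds part of optime.ts.
function checkReplicaSet(status) {
  const primaries = status.members.filter((m) => m.stateStr === "PRIMARY");
  const unhealthy = status.members.filter((m) => m.health !== 1);
  const optimes = new Set(status.members.map((m) => m.optimeSeconds));
  return {
    // Name of the primary, or null if there is not exactly one.
    primary: primaries.length === 1 ? primaries[0].name : null,
    unhealthy: unhealthy.map((m) => m.name),
    // True when every member reports the same optime.
    inSync: optimes.size === 1,
  };
}

// Trimmed copy of the members array shown above.
const status = {
  set: "customer-replica-set",
  members: [
    { _id: 0, name: "customer-replica-set-0", health: 1, stateStr: "PRIMARY", optimeSeconds: 1597585859 },
    { _id: 1, name: "customer-replica-set-1", health: 1, stateStr: "SECONDARY", optimeSeconds: 1597585859 },
    { _id: 2, name: "customer-replica-set-2", health: 1, stateStr: "SECONDARY", optimeSeconds: 1597585859 },
  ],
};

const summary = checkReplicaSet(status);
console.log(summary);
```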

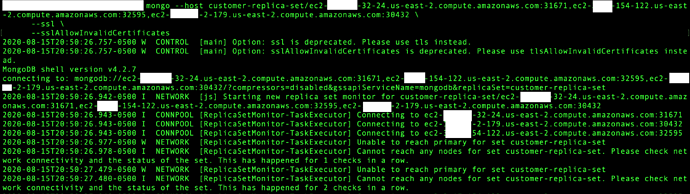

Connecting to a single host through its horizon address shows the same issue:

mongo --host customer-replica-set/ec2-18-216-32-24.us-east-2.compute.amazonaws.com:31671 --tls --tlsAllowInvalidCertificates --verbose

MongoDB shell version v4.2.7

connecting to: mongodb://ec2-18-216-32-24.us-east-2.compute.amazonaws.com:31671/?compressors=disabled&gssapiServiceName=mongodb&replicaSet=customer-replica-set

2020-08-16T09:13:33.132-0500 D1 NETWORK [js] Starting up task executor for monitoring replica sets in response to request to monitor set: customer-replica-set/ec2-18-216-32-24.us-east-2.compute.amazonaws.com:31671

2020-08-16T09:13:33.133-0500 I NETWORK [js] Starting new replica set monitor for customer-replica-set/ec2-18-216-32-24.us-east-2.compute.amazonaws.com:31671

2020-08-16T09:13:33.135-0500 I CONNPOOL [ReplicaSetMonitor-TaskExecutor] Connecting to ec2-18-216-32-24.us-east-2.compute.amazonaws.com:31671

2020-08-16T09:13:33.218-0500 W NETWORK [ReplicaSetMonitor-TaskExecutor] Unable to reach primary for set customer-replica-set

2020-08-16T09:13:33.219-0500 I NETWORK [ReplicaSetMonitor-TaskExecutor] Cannot reach any nodes for set customer-replica-set. Please check network connectivity and the status of the set. This has happened for 1 checks in a row.

telnet ec2-18-216-32-24.us-east-2.compute.amazonaws.com 31671

Trying 18.216.32.24...

telnet: connect to address 18.216.32.24: Connection refused

telnet: Unable to connect to remote host

I deployed an nginx pod behind a NodePort service to make sure that this is not security group (SG) related:

kubectl get services -w

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

customer-replica-set-0 NodePort 10.100.66.6 <none> 27017:31671/TCP 12h

customer-replica-set-1 NodePort 10.100.185.237 <none> 27017:32595/TCP 12h

customer-replica-set-2 NodePort 10.100.37.222 <none> 27017:30432/TCP 12h

customer-replica-set-svc ClusterIP None <none> 27017/TCP 12h

mynginxsvc NodePort 10.100.121.119 <none> 80:30180/TCP 3m28s

operator-webhook ClusterIP 10.100.40.145 <none> 443/TCP 13h

ops-manager-db-svc ClusterIP None <none> 27017/TCP 13h

ops-manager-svc ClusterIP None <none> 8080/TCP 13h

ops-manager-svc-ext LoadBalancer 10.100.146.190 a326d1d4fefc844e49d9da6d8ce1f229-105300929.us-east-2.elb.amazonaws.com 8080:30187/TCP 13h

telnet ec2-3-17-154-122.us-east-2.compute.amazonaws.com 30180

Trying 3.17.154.122...

Connected to ec2-3-17-154-122.us-east-2.compute.amazonaws.com.

Escape character is '^]'.
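One more thing that can be ruled out is a mismatch between the NodePort of each `customer-replica-set-N` service and the port in the matching horizon entry. A small sketch of that cross-check, with the values copied from the outputs in this post; `parseNodePort` and `horizonPort` are hypothetical helper names:

```javascript
// Parse a PORT(S) cell such as "27017:31671/TCP" into its parts.
// Returns null for cells without a node port (e.g. "27017/TCP").
function parseNodePort(portCell) {
  const match = /^(\d+):(\d+)\/(\w+)$/.exec(portCell);
  if (!match) return null;
  return { port: Number(match[1]), nodePort: Number(match[2]), protocol: match[3] };
}

// Extract the port from a "host:port" horizon address.
function horizonPort(address) {
  return Number(address.slice(address.lastIndexOf(":") + 1));
}

// PORT(S) column from `kubectl get services`, keyed by service name.
const services = {
  "customer-replica-set-0": "27017:31671/TCP",
  "customer-replica-set-1": "27017:32595/TCP",
  "customer-replica-set-2": "27017:30432/TCP",
};

// Horizon addresses from rs.conf(), keyed by the matching service name.
const horizons = {
  "customer-replica-set-0": "ec2-18-216-32-24.us-east-2.compute.amazonaws.com:31671",
  "customer-replica-set-1": "ec2-3-17-154-122.us-east-2.compute.amazonaws.com:32595",
  "customer-replica-set-2": "ec2-18-218-2-179.us-east-2.compute.amazonaws.com:30432",
};

// Names of services whose node port differs from the horizon port.
const mismatches = Object.keys(services).filter((name) => {
  const parsed = parseNodePort(services[name]);
  return parsed === null || parsed.nodePort !== horizonPort(horizons[name]);
});

console.log(mismatches.length === 0 ? "ports match" : mismatches);
```

With the values above the ports line up, so the refused connection is not explained by a wrong port in the horizon configuration.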

@Pavel_Duchovny This seems to be specific to the MongoDB Operator and this deployment. I would suggest that you run through the same deployment on an EKS cluster and let me know what you find, since access through a NodePort should be pretty straightforward.